A Virtual Private Cloud (VPC) network can be created inside a GCP project, or you can start with the project's default VPC network.

With VPC, you can provision Cloud Platform resources, connect them to each other, and isolate them from one another.

Google Cloud VPC networks are global, whereas subnets are regional. You can dynamically increase the size of a subnet in a custom network by expanding the range of IP addresses allocated to it. Doing that doesn't affect already configured VMs.

You can use this capability to build solutions that are resilient but still have simple network layouts.
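The subnet-expansion rule above can be checked with Python's standard `ipaddress` module. This is a minimal sketch, assuming an expansion is valid only when the new range is strictly larger and still contains the old one. The helper names and example ranges are hypothetical. In GCP itself, the operation is done with `gcloud compute networks subnets expand-ip-range` and its `--prefix-length` flag.

```python
import ipaddress


def is_valid_expansion(current_cidr, proposed_cidr):
    """True if proposed_cidr is a strictly larger range that contains current_cidr."""
    old = ipaddress.ip_network(current_cidr)
    new = ipaddress.ip_network(proposed_cidr)
    # A smaller prefix length means a bigger range; every old address must stay inside it.
    return new.prefixlen < old.prefixlen and old.subnet_of(new)


def vms_unaffected(vm_ips, proposed_cidr):
    """True if every existing VM address still falls inside the expanded range."""
    new = ipaddress.ip_network(proposed_cidr)
    return all(ipaddress.ip_address(ip) in new for ip in vm_ips)


print(is_valid_expansion("10.0.0.0/24", "10.0.0.0/20"))     # True
print(vms_unaffected(["10.0.0.2", "10.0.0.3"], "10.0.0.0/20"))  # True
```

Because the old range is a subset of the new one, existing VMs keep their addresses. That is why expanding a subnet doesn't disturb them.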

Use big VMs for memory- and compute-intensive operations. At the time of writing, the largest GCP VM has 96 virtual CPUs and 624 GB of RAM. Use autoscaling for resilient, scalable applications.

Preemptible VMs can be chosen to reduce costs. Their per-hour price includes a substantial discount, in exchange for Compute Engine being allowed to stop (preempt) them at any time.

Important VPC capabilities

Just like physical networks, VPC networks have routing tables. VPC routes forward traffic from one instance to another within the same network, even across subnets. Use the built-in firewall to control which network traffic is allowed.
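To make the firewall idea concrete, here is a simplified model loosely based on GCP firewall rules, not the real evaluation engine. It uses three features: priorities from 0 to 65535 where a lower number wins, target tags, and an implied deny-all ingress rule. The class, field, and rule names are hypothetical. Real rules also match protocols, port ranges, source tags, and service accounts.

```python
import ipaddress
from dataclasses import dataclass


@dataclass
class FirewallRule:
    name: str
    priority: int        # 0-65535; a lower number means higher precedence
    action: str          # "allow" or "deny"
    port: int            # single TCP port, to keep the sketch small
    source_range: str    # CIDR the traffic must come from
    target_tags: frozenset = frozenset()  # empty set = applies to all instances


def evaluate_ingress(rules, vm_tags, src_ip, port):
    """Return "allow" or "deny" for an ingress TCP connection to a VM carrying vm_tags."""
    src = ipaddress.ip_address(src_ip)
    # Lowest priority number first; at equal priority this sketch checks deny before allow.
    ordered = sorted(rules, key=lambda r: (r.priority, r.action != "deny"))
    for rule in ordered:
        targets_vm = not rule.target_tags or bool(rule.target_tags & set(vm_tags))
        if (targets_vm and rule.port == port
                and src in ipaddress.ip_network(rule.source_range)):
            return rule.action
    return "deny"  # the implied deny-all ingress rule


rules = [
    FirewallRule("allow-ssh-from-bastion", 1000, "allow", 22, "10.0.0.0/24",
                 frozenset({"ssh-target"})),
]
print(evaluate_ingress(rules, {"ssh-target"}, "10.0.0.5", 22))  # allow
print(evaluate_ingress(rules, {"web"}, "10.0.0.5", 22))         # deny
```

Matching on tags rather than IP addresses means any new VM created with the `ssh-target` tag is covered automatically, whatever address it receives.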

Use VPC Network Peering to interconnect VPC networks, including networks that belong to different GCP projects.

Cloud Load Balancing is a fully distributed, software-defined managed service for all your traffic. Traffic enters Google's backbone at the point of presence closest to the user. Backends are selected based on load, and only healthy backends receive traffic. No pre-warming is required for the load balancer (LB). Google VPC offers a suite of load-balancing options, including Layer 7 (HTTP(S)) load balancing and Layer 4 load balancing of non-HTTPS SSL traffic or non-SSL TCP traffic.
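The two rules "only healthy backends receive traffic" and "backends are selected based on load" can be illustrated with a toy selector. This is a sketch under simple assumptions (least-utilized healthy backend wins), not Cloud Load Balancing's actual algorithm, which also weighs proximity and balancing modes. The backend names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    healthy: bool     # result of the most recent health check
    in_flight: int    # requests currently being served
    capacity: int     # requests this backend is allowed to serve at once


def pick_backend(backends):
    """Return the healthy backend with the lowest utilization, or None if none can take traffic."""
    candidates = [b for b in backends if b.healthy and b.in_flight < b.capacity]
    if not candidates:
        return None
    return min(candidates, key=lambda b: b.in_flight / b.capacity)


pool = [
    Backend("us-east1-vm", healthy=True, in_flight=8, capacity=10),
    Backend("europe-west1-vm", healthy=False, in_flight=0, capacity=10),
    Backend("asia-east1-vm", healthy=True, in_flight=2, capacity=10),
]
print(pick_backend(pool).name)  # asia-east1-vm: the unhealthy backend is skipped
```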

Cloud DNS is Google's managed DNS service, and it is highly available and scalable. Don't confuse it with Google Public DNS, the free 8.8.8.8 resolver. In Cloud DNS, you can create managed zones and then add, edit, and delete DNS records. You can also manage zones and records programmatically using the RESTful API or the command-line interface.

Cloud CDN (Content Delivery Network) is available as well. It uses Google's globally distributed edge caches to cache content close to your users. If you'd rather use a different CDN provider, you can use CDN Interconnect.

GCP offers many interconnect options:

- VPN: Secure multi-Gbps connection over IPsec tunnels
- Direct Peering: Private connection between you and Google for your hybrid cloud workloads
- Carrier Peering: Connection through the largest partner network of service providers
- Dedicated Interconnect: Connect N × 10 Gbps transport circuits for private cloud traffic to Google Cloud at Google POPs

A network:

- Has no IP address range
- Is global and spans all available regions
- Contains subnetworks
- Can be of type default, auto mode, or custom mode (an auto mode network can be converted to custom mode, but once custom, always custom)

Subnetworks

Subnetworks are for managing resources. Networks have no IP range, so subnetworks don't need to fit into an addressing hierarchy. Instead, subnetworks can be used to group and manage resources. They can represent departments, business functions, or systems.

DNS resolution for internal addresses

Each instance has a hostname that can be resolved to an internal IP address

An internal DNS resolver handles name resolution:

- Provided as part of Compute Engine
- Configured for use on the instance via DHCP
- Provides answers for both internal and external addresses
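Internal hostnames follow a fixed pattern. The helpers below build the zonal form `INSTANCE.ZONE.c.PROJECT_ID.internal` and the global form `INSTANCE.c.PROJECT_ID.internal`, as documented by Compute Engine at the time of writing. Check the current internal DNS documentation before relying on them. The project ID is a hypothetical example.

```python
def zonal_dns_name(instance: str, zone: str, project_id: str) -> str:
    """Zonal internal DNS name of a Compute Engine instance."""
    return f"{instance}.{zone}.c.{project_id}.internal"


def global_dns_name(instance: str, project_id: str) -> str:
    """Global (project-wide) internal DNS name of a Compute Engine instance."""
    return f"{instance}.c.{project_id}.internal"


print(zonal_dns_name("learn-1", "us-east1-b", "my-sample-project"))
# learn-1.us-east1-b.c.my-sample-project.internal
```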

A VM instance is unaware of its external IP address, because that address is not configured on the VM's network interface. Instead, the VPC keeps a lookup table that maps each external IP address to the matching internal address.
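The lookup table described above behaves like one-to-one address translation. The toy class below, whose name and methods are hypothetical, shows the idea: traffic arriving for an external IP is delivered to the matching internal IP, so the VM only ever sees its internal address. It is an illustration, not how Google implements it. The addresses come from documentation and private ranges.

```python
class ExternalAddressTable:
    """Toy one-to-one table: external IP -> internal IP of the VM that owns it."""

    def __init__(self):
        self._ext_to_int = {}

    def assign(self, external_ip, internal_ip):
        self._ext_to_int[external_ip] = internal_ip

    def translate_inbound(self, dst_external_ip):
        # The packet reaches the VM addressed to its internal IP only.
        return self._ext_to_int.get(dst_external_ip)

    def translate_outbound(self, src_internal_ip):
        # Replies leave with the external IP mapped to the sender, if there is one.
        for external, internal in self._ext_to_int.items():
            if internal == src_internal_ip:
                return external
        return None  # this VM has no external IP in the table


table = ExternalAddressTable()
table.assign("203.0.113.10", "10.0.0.2")
print(table.translate_inbound("203.0.113.10"))  # 10.0.0.2
```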

Network billing:

NOTE: Any feature marked "BETA" has no Service Level Agreement (SLA)

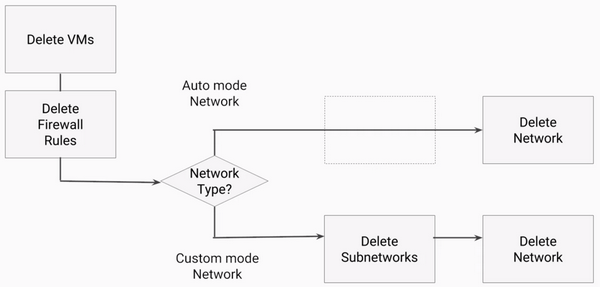

Network deletion rules:

Network lab:

In a few cases, you may try to ping the other machine's internal IP, and it succeeds! Do you know why this would be the case?

Because the internal IP address of the machine you are using could be the same as the internal IP address of the VM in the other network. In that case, the ping succeeds because you are actually pinging your own VM's interface, not the VM in the other network. You can't ping an internal IP address that exists in a different network from your own.

When you create a new auto mode network, its IP ranges are identical to the default network's ranges. The first addresses in each range are reserved (the network address, then the default gateway address). So it is quite likely that the first VM in a zone will have the same address as the first VM in the corresponding zone of another network.
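This sketch shows why two auto mode networks tend to hand out the same first address. It assumes the four addresses Google Cloud reserves in each subnet's primary range: the network address, the default gateway, the second-to-last address, and the broadcast address. It also assumes, for illustration only, that the first VM gets the lowest free address. The `/20` range is just an example.

```python
import ipaddress


def reserved_addresses(cidr):
    """Addresses Google Cloud reserves in a subnet's primary IPv4 range."""
    net = ipaddress.ip_network(cidr)
    return {
        net.network_address,        # network address
        net.network_address + 1,    # default gateway
        net.broadcast_address - 1,  # reserved by Google Cloud
        net.broadcast_address,      # broadcast address
    }


def first_assignable(cidr):
    """Lowest address not reserved (assumes sequential allocation, for illustration)."""
    net = ipaddress.ip_network(cidr)
    reserved = reserved_addresses(cidr)
    return next(ip for ip in net.hosts() if ip not in reserved)


# Two different networks using the same range produce the same candidate address.
print(first_assignable("10.128.0.0/20"))  # 10.128.0.2
```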

If it didn't work: learn-1 is in the default network, and learn-2 is in the learnauto network. Even though both VMs are located in the same region (us-east1) and in the same zone (us-east1-b), they cannot communicate over their internal IP addresses.

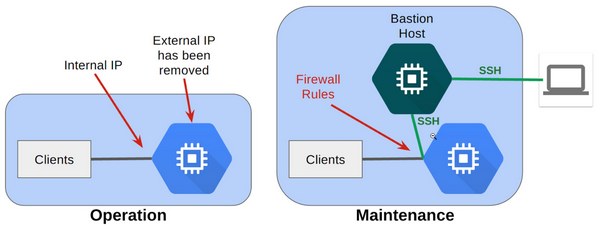

Bastion host

A best practice for infrastructure administration is to limit access to the resources:

During operations, you harden the server by removing its external IP address, which prevents connections from the internet. During maintenance, you start up a bastion host that has an external IP address. You then connect via SSH to the bastion host, and from there to the server over its internal IP address. You can further restrict access with firewall rules.
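The two-hop connection can be expressed with OpenSSH's standard `-J` (ProxyJump) option, available since OpenSSH 7.3. This sketch only builds the command line. The user name, bastion hostname, and internal IP are hypothetical placeholders.

```python
import shlex


def bastion_ssh_command(user, bastion_host, internal_ip):
    """OpenSSH argv that jumps through the bastion to reach the server's internal IP."""
    return ["ssh", "-J", f"{user}@{bastion_host}", f"{user}@{internal_ip}"]


cmd = bastion_ssh_command("admin", "bastion.example.com", "10.0.0.5")
print(shlex.join(cmd))  # ssh -J admin@bastion.example.com admin@10.0.0.5
```

Only the bastion needs an external IP. The server's firewall rules can then allow SSH solely from the bastion.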

Quiz:

- True or False: Google Cloud Load Balancing allows you to balance HTTP-based traffic across multiple Compute Engine regions. True
- Which statement is true about Google VPC networks and subnets? Networks are global; subnets are regional
- An application running in a Compute Engine virtual machine needs high-performance scratch space. Which type of storage meets this need? Local SSD
- Choose an application that would be suitable for running in a Preemptible VM. A batch job that can be checkpointed and restarted
- How do Compute Engine customers choose between big VMs and many VMs? Use big VMs for in-memory databases and CPU-intensive analytics; use many VMs for fault tolerance and elasticity
- How do VPC routers and firewalls work? Google manages them as a built-in feature
- A GCP customer wants to load-balance traffic among the back-end VMs that form part of a multi-tier application. Which load-balancing option should this customer choose? The regional internal load balancer
- For which of these interconnect options is a Service Level Agreement available? Dedicated Interconnect
- What is a key distinguishing feature of networking in the Google Cloud Platform? Network topology is not dependent on address layout
- What are the three types of networks offered in the Google Cloud Platform? Default network, auto network, and custom network
- What is one benefit of applying firewall rules by tag rather than by address? When a VM is created with a matching tag, the firewall rules apply irrespective of the IP address it is assigned
- What is the purpose of Virtual Private Networking (VPN)? To enable a secure communication method (a tunnel) to connect two trusted environments through an untrusted environment, such as the Internet
- Why might you use Cloud Interconnect or Direct Peering instead of a VPN? Cloud Interconnect and Direct Peering can provide higher availability, lower latency, and lower cost for data-intensive applications
- What is the purpose of a Cloud Router, and why does that matter? It implements a dynamic VPN that allows topology to be discovered and shared automatically, which reduces manual static route maintenance
- How does the autoscaler resolve conflicts between multiple scaling policies?
- The following command enables autoscaling for a managed instance group using CPU utilization: `gcloud compute instance-groups managed set-autoscaling example-managed-instance-group --max-num-replicas 20 --target-cpu-utilization 0.75 --cool-down-period 90`. Which of the following statements correctly explains what the command is creating?
- Which statement is true of autoscaling custom metrics?
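The autoscaling command in the quiz targets 75% average CPU utilization with at most 20 instances. The sketch below uses a simplified proportional model, which is an assumption and not the autoscaler's exact algorithm. In that model, the group is resized so that average utilization returns to the target, clamped to the replica limits. It ignores the 90-second cool-down period. The second function reflects GCP's documented behavior for multiple policies: the autoscaler follows the policy that recommends the largest number of VMs. Function names and the default `min_replicas` are hypothetical.

```python
import math


def recommended_size(current_vms, avg_cpu_utilization, target=0.75,
                     min_replicas=1, max_replicas=20):
    """Group size that would bring average CPU utilization back to `target`."""
    # Total CPU demand, spread so that each VM sits at the target utilization.
    wanted = math.ceil(current_vms * avg_cpu_utilization / target)
    return max(min_replicas, min(max_replicas, wanted))


def resolve_policies(recommendations):
    """With several scaling policies, follow the one that asks for the most VMs."""
    return max(recommendations)


print(recommended_size(4, 0.375))  # 2: utilization is half the target, so shrink
print(recommended_size(30, 1.0))   # 20: 40 would be needed, capped at --max-num-replicas
```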

GCP Storage options

Every application needs to store data, whether it is media to be streamed or sensor data. In GCP, the core storage options are:

- Cloud Storage
- Cloud SQL
- Cloud Spanner
- Cloud Datastore
- Cloud Bigtable

Cloud Storage is binary large object (BLOB) storage. It offers high performance, internet scale, and simple administration, and it does not require capacity management: capacity does not need to be provisioned ahead of time. GCP stores your objects with high durability and availability. Cloud Storage can be used for many different purposes, such as storing static website content or distributing large objects to end users for download. Cloud Storage is not a file system: each object is addressed by its own URL. Cloud Storage is organized into buckets, and you create and store your objects in them. Stored objects are immutable (i.e., they cannot be edited in place); instead, you create new versions. Cloud Storage encrypts your data at rest and in transit out of the box. GCP also offers lifecycle management for Cloud Storage objects (for example, deleting outdated versions). A new version of an object always overwrites the old one, unless you turn on versioning.
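The overwrite-versus-versioning behavior and a lifecycle cleanup rule can be modeled in a few lines. `ToyBucket` is a hypothetical in-memory stand-in, not the Cloud Storage client library. Its `apply_lifecycle` method mimics the spirit of the real lifecycle condition `numNewerVersions`: delete noncurrent versions that have at least N newer versions.

```python
class ToyBucket:
    """In-memory stand-in for a bucket; NOT the Cloud Storage client library."""

    def __init__(self, versioning=False):
        self.versioning = versioning
        self._history = {}  # object name -> [oldest, ..., live version]

    def upload(self, name, data):
        if self.versioning:
            self._history.setdefault(name, []).append(data)  # keep older versions
        else:
            self._history[name] = [data]  # overwrite: the old data is gone

    def read(self, name):
        return self._history[name][-1]  # the live (newest) version

    def versions(self, name):
        return list(self._history[name])

    def apply_lifecycle(self, num_newer_versions):
        """Delete noncurrent versions that have at least num_newer_versions newer versions."""
        if num_newer_versions < 1:
            raise ValueError("the live version always has 0 newer versions; use >= 1")
        for name, history in self._history.items():
            newest_index = len(history) - 1
            self._history[name] = [
                v for i, v in enumerate(history)
                if newest_index - i < num_newer_versions
            ]


plain = ToyBucket()
plain.upload("report.csv", "v1")
plain.upload("report.csv", "v2")
print(plain.versions("report.csv"))      # ['v2']

versioned = ToyBucket(versioning=True)
for v in ("v1", "v2", "v3"):
    versioned.upload("report.csv", v)
versioned.apply_lifecycle(num_newer_versions=2)
print(versioned.versions("report.csv"))  # ['v2', 'v3']
```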

Cloud Storage offers four storage classes:

- Multi-regional (most frequently accessed, 99.95% availability). Good for content storage and delivery.
- Regional (accessed frequently within a region, 99.90% availability). Good for in-region analytics and transcoding.
- Nearline (accessed less than once a month, 99.00% availability). Good for long-tail content and backups.
- Coldline (accessed less than once a year, 99.00% availability). Good for archiving and disaster recovery.
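The storage-class list above boils down to a rule of thumb based on access frequency. This sketch encodes it using the class identifiers of that era (`MULTI_REGIONAL`, `REGIONAL`, `NEARLINE`, `COLDLINE`). The thresholds come straight from the list. The function name and its `serve_globally` flag are hypothetical, and real choices also weigh retrieval fees and minimum storage durations.

```python
def choose_storage_class(accesses_per_year, serve_globally):
    """Rule of thumb from the storage-class list; not an official decision procedure."""
    if accesses_per_year < 1:
        return "COLDLINE"        # accessed less than once a year
    if accesses_per_year < 12:
        return "NEARLINE"        # accessed less than once a month
    # Frequently accessed data: multi-regional for worldwide delivery, regional otherwise.
    return "MULTI_REGIONAL" if serve_globally else "REGIONAL"


print(choose_storage_class(0.5, serve_globally=False))  # COLDLINE
print(choose_storage_class(365, serve_globally=True))   # MULTI_REGIONAL
```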

There are several ways to bring data to Cloud Storage:

- Online transfer (using command-line tools such as `gsutil`)
- Storage Transfer Service (scheduled and managed batch transfers)
- Transfer Appliance, in beta at the time of writing (a rackable appliance to securely ship your data). You can transfer up to a petabyte of data that way. The closest AWS analog is Snowball.
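A quick calculation shows why shipping an appliance can beat an online transfer for petabyte-scale data. This sketch assumes decimal units (1 PB = 10^15 bytes) and an idealized link. Real throughput is lower, which the hypothetical `efficiency` parameter approximates.

```python
SECONDS_PER_DAY = 86_400


def transfer_days(num_bytes, link_gbps, efficiency=1.0):
    """Days needed to move num_bytes over a link_gbps link at the given efficiency (0-1]."""
    bits = num_bytes * 8
    bits_per_second = link_gbps * 1e9 * efficiency
    return bits / bits_per_second / SECONDS_PER_DAY


PETABYTE = 10**15  # decimal petabyte
print(round(transfer_days(PETABYTE, 1), 1))  # 92.6 days on a fully used 1 Gbps link
```

Roughly three months of saturating a 1 Gbps link, before any inefficiency, is the kind of figure that makes a physical appliance attractive.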

NOTE: A persistent disk can be resized even while it is attached to a VM. You can grow a disk but never shrink it. Increasing a persistent disk's size also increases its IOPS capacity.

Quiz:

- Your Cloud Storage objects live in buckets. Which of these characteristics do you define on a per-bucket basis? Choose all that are correct (3 correct answers). A geographic location. A default storage class. A globally-unique name.
- Cloud Storage is well suited to providing the root file system of a Linux virtual machine. False.
- Why would a customer consider the Coldline storage class? To save money on storing infrequently accessed data.
- What is a fundamental difference between a snapshot of a boot persistent disk and a custom image? A snapshot is locked within a project, but a custom image can be shared between projects.
- What happens when a custom image is marked "Obsolete"? No new projects can use the custom image, but those already with the image can continue to use it.
- From where can you import boot disk images for Compute Engine (select 3)?

Cloud Bigtable and Cloud Datastore

Cloud Bigtable is Google's NoSQL big data database service; in other words, it is a managed NoSQL database. It offers a wide-column database service for terabyte-scale applications. It is accessed through the HBase API and is natively compatible with the big data Hadoop ecosystem (more about Hadoop in my blog: https://strive2code.net/?tag=Hadoop). Bigtable drives major applications such as Google Analytics and Gmail.
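Bigtable is often described as a persistent, sorted hashtable: rows are kept in lexicographic order by row key, and each row stores only the cells it actually has. The toy class below is hypothetical and not the Bigtable client API. It illustrates sparse rows and fast prefix scans. The `sensor#ID#DATE` row-key design is an illustrative assumption, typical of IoT workloads.

```python
import bisect


class ToyWideColumnTable:
    """Sparse, sorted map: row key -> {column: value}. Not the Bigtable client API."""

    def __init__(self):
        self._keys = []   # row keys kept in lexicographic order
        self._rows = {}

    def put(self, row_key, column, value):
        if row_key not in self._rows:
            bisect.insort(self._keys, row_key)
            self._rows[row_key] = {}
        self._rows[row_key][column] = value  # only populated cells are stored

    def get(self, row_key):
        return self._rows.get(row_key, {})

    def scan_prefix(self, prefix):
        """Yield (row_key, cells) for all rows whose key starts with prefix, in order."""
        start = bisect.bisect_left(self._keys, prefix)
        for key in self._keys[start:]:
            if not key.startswith(prefix):
                break
            yield key, self._rows[key]


t = ToyWideColumnTable()
t.put("sensor#42#2024-01-02", "temp", 21.5)
t.put("sensor#42#2024-01-01", "temp", 20.0)
t.put("sensor#7#2024-01-01", "humidity", 0.4)
print([k for k, _ in t.scan_prefix("sensor#42#")])
# ['sensor#42#2024-01-01', 'sensor#42#2024-01-02']
```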

Cloud Datastore is a horizontally scalable NoSQL database designed for application backends. It automatically handles sharding and replication. Unlike Bigtable, it supports transactions and has a free daily quota. Cloud Datastore provides many capabilities, such as ACID transactions, SQL-like queries, and indexes.

Quiz:

- Each table in NoSQL databases, such as Cloud Bigtable, has a single schema that is enforced by the database engine itself. False.
- Some developers think of Cloud Bigtable as a persistent hashtable. What does that mean? Each item in the database can be sparsely populated and is looked up with a single key.
- How are Cloud Datastore and Cloud Bigtable alike? Choose all that are correct (2 correct answers). They are both NoSQL databases. They are both highly scalable.
- Cloud Datastore databases can span App Engine and Compute Engine applications. True.

Cloud SQL and Cloud Spanner

Cloud SQL is a managed RDBMS. It offers MySQL and PostgreSQL databases as a service for transactional data. Both database engines can store terabytes of data. Using a managed service brings multiple benefits:

- Automatic replication between multiple zones
- Horizontal scaling for reads (read replicas)
- Google security (customer data is encrypted, including backups)
- Compute Engine instances can be authorized to access Cloud SQL, and a Cloud SQL instance can be configured to run in the same zone as those instances
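Read scaling works by sending writes to the primary instance and spreading reads across read replicas. The router below is a hypothetical application-side sketch, not a Cloud SQL feature or API. It classifies statements naively by their first keyword and rotates reads across replicas. Keep in mind that replicas may lag slightly behind the primary. The instance names are placeholders.

```python
import itertools


class ToyReadWriteRouter:
    """Send writes to the primary and rotate reads across read replicas."""

    def __init__(self, primary, replicas):
        self.primary = primary
        self._replicas = itertools.cycle(replicas) if replicas else None

    def route(self, sql):
        # Naive classification for the sketch: only SELECT statements count as reads.
        is_read = sql.lstrip().upper().startswith("SELECT")
        if is_read and self._replicas is not None:
            return next(self._replicas)
        return self.primary


router = ToyReadWriteRouter("sql-primary", ["sql-replica-a", "sql-replica-b"])
print(router.route("SELECT * FROM orders"))         # sql-replica-a
print(router.route("INSERT INTO orders VALUES (1)"))  # sql-primary
```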

Cloud Spanner is a horizontally scalable RDBMS. It offers strong global consistency and managed instances with high availability, and it can provide petabytes of capacity. Consider using it if you have outgrown a single relational database, are sharding your databases for throughput and high performance, need transactional consistency, or simply want to consolidate your databases.

Quiz:

- Which database service can scale to higher database sizes? Cloud Spanner can scale to petabyte database sizes, while Cloud SQL is limited by the size of the database instances you choose. At the time this quiz was created, the maximum was 10,230 GB.
- Which database service presents a MySQL or PostgreSQL interface to clients? Each Cloud SQL database is configured at creation time for either MySQL or PostgreSQL. Cloud Spanner uses ANSI SQL 2011 with extensions.
- Which database service offers transactional consistency on a global scale? Cloud Spanner.

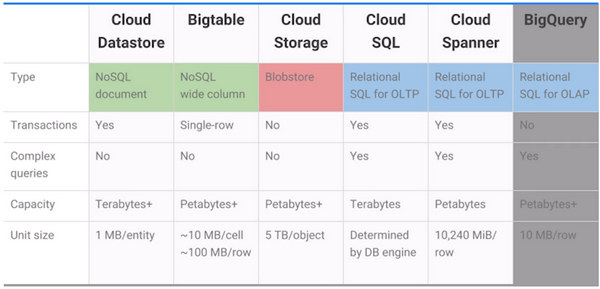

Comparing storage options:

- Consider using Cloud Datastore if you need to store unstructured objects or if you require support for transactions and SQL-like queries. This storage service provides terabytes of capacity with a maximum unit size of one megabyte per entity. It is best for semi-structured application data used in App Engine applications.
- Consider using Cloud Bigtable if you need to store a large number of structured objects. Cloud Bigtable does not support SQL queries, nor does it support multi-row transactions. This storage service provides petabytes of capacity with a maximum unit size of 10 MB per cell and 100 MB per row. It is best for analytical data with heavy read/write events, such as AdTech, financial, or IoT data.
- Consider using Cloud Storage if you need to store immutable blobs larger than 10 megabytes, such as large images or movies. This storage service provides petabytes of capacity with a maximum unit size of five terabytes per object. It is best for structured and unstructured binary or object data, such as images, large media files, and backups.
- Consider using Cloud SQL or Cloud Spanner if you need full SQL support for an online transaction processing (OLTP) system. Cloud SQL provides terabytes of capacity, while Cloud Spanner provides petabytes. If Cloud SQL does not fit your requirements because you need horizontal scalability beyond read replicas, consider Cloud Spanner. Cloud SQL is best for web frameworks and existing applications, such as storing user credentials and customer orders. Cloud Spanner is best for large-scale database applications larger than two terabytes, for example, financial trading and e-commerce use cases.
- The usual reason to store data in BigQuery is to use its big data analysis and interactive query capabilities. You would not want to use BigQuery, for example, as the backing store for an online application.
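The comparison above can be condensed into a rough decision helper. This is a hypothetical rule-of-thumb sketch that mirrors the bullets' criteria and checks them in a fixed order. It is not an official decision tree, and real workloads often combine several services.

```python
def suggest_storage(*, analytics=False, full_sql=False, horizontal_scale=False,
                    large_blobs=False, heavy_read_write=False):
    """Map the comparison bullets to a GCP storage service (rule of thumb only)."""
    if analytics:
        return "BigQuery"                 # interactive big data analysis
    if full_sql:
        # OLTP with full SQL: Spanner when horizontal scaling beyond replicas is needed.
        return "Cloud Spanner" if horizontal_scale else "Cloud SQL"
    if large_blobs:
        return "Cloud Storage"            # immutable blobs such as video files
    if heavy_read_write:
        return "Cloud Bigtable"           # high-throughput analytical / IoT data
    return "Cloud Datastore"              # semi-structured application data


print(suggest_storage(large_blobs=True))       # Cloud Storage
print(suggest_storage(heavy_read_write=True))  # Cloud Bigtable
```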

Quiz:

- You are developing an application that transcodes large video files. Which storage option is the best choice for your application? Cloud Storage.
- You manufacture devices with sensors and need to stream huge amounts of data from these devices to a storage option in the cloud. Which Google Cloud Platform storage option is the best choice for your application? Cloud Bigtable.
- Which statement is true about objects in Cloud Storage? They are immutable, and new versions overwrite old unless you turn on versioning.
- You are building a small application. If possible, you'd like this application's data storage to be at no additional charge. Which service has a free daily quota, separate from any free trials? Cloud Datastore.
- How do the Nearline and Coldline storage classes differ from Multi-regional and Regional? Choose all that are correct (2 responses). Nearline and Coldline assess lower storage fees. Nearline and Coldline assess additional retrieval fees.
- Your application needs a relational database, and it expects to talk to MySQL. Which storage option is the best choice for your application? Cloud SQL.
- Your application needs to store data with strong transactional consistency, and you want seamless scaling up. Which storage option is the best choice for your application? Cloud Spanner.
- Which GCP storage service is often the ingestion point for data being moved into the cloud, and is frequently the long-term storage location for data? Cloud Storage.

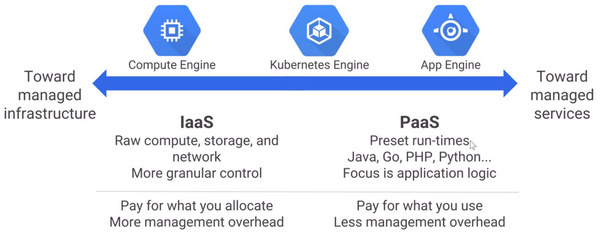

Containers

Another choice for organizing your compute is to use containers rather than virtual machines, and to manage them with Kubernetes Engine. Containers are simple and interoperable, and they enable seamless, fine-grained scaling:

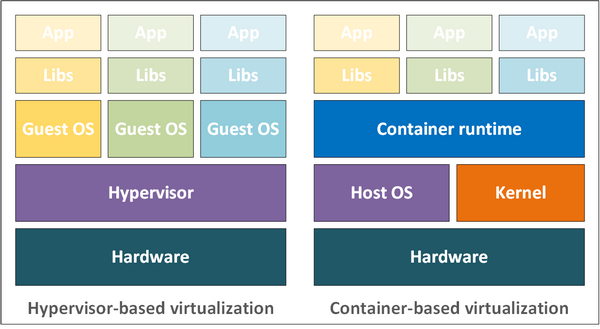

Container-based virtualization is an alternative to the hardware virtualization used by traditional virtual machines. Virtual machines are isolated from one another in part because each virtual machine has its own instance of the operating system. But operating systems can be slow to boot and resource-heavy. Containers address these problems by using modern operating systems' built-in capabilities to isolate environments from one another.

A process is a running program. In Linux and Windows, the memory address spaces of running processes have long been isolated from one another. Popular implementations of software containers build on this isolation and take advantage of additional operating system features: they give processes their own namespaces, and they give a supervisor process the ability to limit other processes' access to resources. Containers start much faster than virtual machines and use fewer resources because each container does not have its own instance of the operating system. Instead, developers configure each container with a minimal set of software libraries to do the job. A lightweight container runtime does the plumbing needed to launch and run the container, calling into the kernel as necessary. The container runtime also determines the image format. At the time of writing, Kubernetes Engine uses the Docker container runtime.

What does the container provide that a virtual machine does not?

- Consistency across development, testing, and production environments
- Loose coupling between application and operating system layers (containers are easier to move around because they don't include their own operating system)
- Simplified workload migration between on-premises and cloud environments
- Agile development and operations

Quiz:

- Each container has its own instance of an operating system. False.
- Containers are loosely coupled to their environments. What does that mean? Choose all the statements that are true. (3 correct answers):
  - Deploying a containerized application consumes fewer resources and is less error-prone than deploying an application in virtual machines
  - Containers abstract away unimportant details of their environments
  - Containers are easy to move around

Kubernetes

Although containers are a very efficient abstraction, anyone who uses them still needs a scalable way to run and manage them. For its own internal operations, Google built a cluster management, networking, and naming system to run container technology at Google scale. It built on what it learned to create Kubernetes, which it has offered to the world as open source. Many companies want to work in a multi-cloud world, and containerizing their apps helps them achieve this goal.

Kubernetes is a container cluster management system. It operates on Pods: a Pod is a group of containers that are deployed together and share networking (each Pod gets its own IP address). Kubernetes eases application management. On GCP, Kubernetes Engine makes applications more elastic by offering multi-zone clustering, load balancing, and autoscaling.
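A Pod is described to Kubernetes as a manifest. Kubernetes accepts manifests in JSON as well as YAML, so the sketch builds a minimal single-container Pod manifest as a Python dict and prints it as JSON. The result could be saved to a file and passed to `kubectl apply -f`. The Pod name, label, and `nginx:1.25` image are illustrative assumptions.

```python
import json


def pod_manifest(name, image, container_port):
    """Build a minimal single-container Pod manifest (Kubernetes core/v1)."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"app": name}},
        "spec": {
            "containers": [
                {
                    "name": name,
                    "image": image,
                    "ports": [{"containerPort": container_port}],
                }
            ]
        },
    }


print(json.dumps(pod_manifest("web", "nginx:1.25", 80), indent=2))
```

Adding a second entry to `containers` would produce a multi-container Pod, whose containers are scheduled together and share the Pod's IP address.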

Quiz 1:

- What is a Kubernetes Pod? In Kubernetes, a group of one or more containers is called a pod. Containers in a pod are deployed together. They are started, stopped, and replicated as a group. The simplest workload that Kubernetes can deploy is a pod that consists only of a single container.
- What is a Kubernetes cluster? A Kubernetes cluster is a group of machines where Kubernetes can schedule containers in pods. The machines in the cluster are called “nodes.”

Kubernetes Engine manages and runs containers. It is a fully managed system for running containers, built on Compute Engine instances and resources. It is also declarative: you declare your desired application configuration, and Kubernetes Engine implements and manages it.
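"Declarative" means a controller keeps comparing the desired state with the observed state and acts to close the gap. The function below is a hypothetical, heavily simplified sketch of one reconciliation step for a replica count. It is not Kubernetes code, and the generated `pod-N` names are placeholders.

```python
def reconcile(desired_replicas, running_pods):
    """Return the actions a controller would take to reach the desired Pod count."""
    diff = desired_replicas - len(running_pods)
    if diff > 0:
        # Too few Pods: create the missing ones (names are placeholders).
        return [("create", f"pod-{len(running_pods) + i}") for i in range(diff)]
    if diff < 0:
        # Too many Pods: delete the surplus.
        return [("delete", name) for name in running_pods[desired_replicas:]]
    return []  # observed state already matches the declared state


print(reconcile(3, ["pod-0"]))
# [('create', 'pod-1'), ('create', 'pod-2')]
```

You only change `desired_replicas`, and the loop works out the steps. That is the essence of declaring configuration rather than scripting actions.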

Quiz 2:

- Where do the resources used to build Kubernetes Engine clusters come from? Because the resources used to build Kubernetes Engine clusters come from Compute Engine, Kubernetes Engine takes advantage of Compute Engine’s and Google VPC’s capabilities.
- Google keeps Kubernetes Engine refreshed with successive versions of Kubernetes. True. The Kubernetes Engine team periodically performs automatic upgrades of your cluster master to newer stable versions of Kubernetes, and you can enable automatic node upgrades too.
- Identify two reasons for deploying applications using containers. (Choose 2 responses.) Simpler to migrate workloads. Consistency across development, testing, production environments.
- Kubernetes allows you to manage container clusters in multiple cloud providers. True.
- Google Cloud Platform provides a secure, high-speed container image storage service for use with Kubernetes Engine. True.
- In Kubernetes, what does "pod" refer to? A group of containers that work together.
- Does Google Cloud Platform offer its own tool for building containers (other than the ordinary docker command)? Yes, the GCP-provided tool is an option, but customers may choose not to use it.
- Where do your Kubernetes Engine workloads run? In clusters built from Compute Engine virtual machines.

Kubernetes is my favorite area, and I could talk about it for hours beyond cert preparation, but I have to stop here. I will be releasing the second part of this article soon to make the reading less condensed and overwhelming.

Ciao and see you soon, stay tuned.

References: